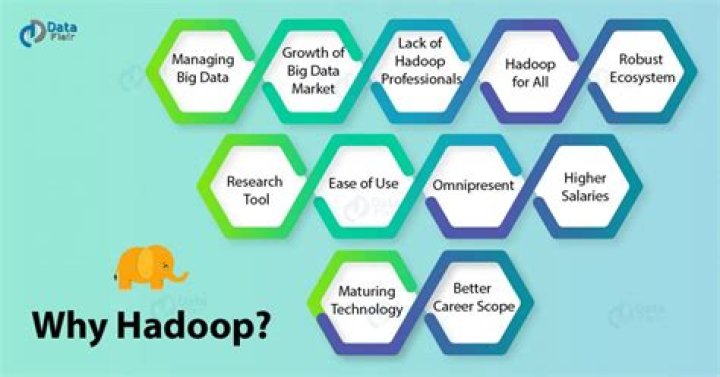

Why is Hadoop popular?


Is Hadoop still popular?

While Hadoop for data processing is by no means dead, Google Trends data shows that Hadoop hit its peak popularity as a search term in summer 2015, and it has been on a downward slide ever since.

Is Hadoop dead in 2019?

Businesses whose primary concern was Hadoop infrastructure, like Cloudera and Hortonworks, were seeing less and less adoption. This led to the eventual merger of the two companies in 2019, and the same message rang out from different corners of the world at the same time: 'Hadoop is dead.'

Why is Hadoop important?

Hadoop is an open-source software framework for storing data and running applications on clusters of commodity hardware. It provides massive storage for any kind of data, enormous processing power and the ability to handle virtually limitless concurrent tasks or jobs.
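Hadoop's processing side is built around the MapReduce model: a map step emits key/value pairs, the framework groups them by key (the shuffle), and a reduce step combines each group. The sketch below simulates that flow for a classic word count in a single Python process. It is only an illustration of the model under that assumption: it does not use Hadoop's APIs (real Hadoop jobs are typically written in Java and run on many nodes), and the function names are hypothetical.

```python
from collections import defaultdict
from typing import Dict, Iterable, Iterator, List, Tuple


def map_phase(line: str) -> Iterator[Tuple[str, int]]:
    """Hypothetical mapper: emit (word, 1) for every word in one input line."""
    for word in line.lower().split():
        yield word, 1


def shuffle_phase(pairs: Iterable[Tuple[str, int]]) -> Dict[str, List[int]]:
    """Group all emitted values by key, as the framework does between map and reduce."""
    grouped: Dict[str, List[int]] = defaultdict(list)
    for key, value in pairs:
        grouped[key].append(value)
    return grouped


def reduce_phase(key: str, values: List[int]) -> Tuple[str, int]:
    """Hypothetical reducer: combine all values for one key into a single count."""
    return key, sum(values)


def word_count(lines: Iterable[str]) -> Dict[str, int]:
    # On a real cluster, mappers and reducers run in parallel on different nodes;
    # here every phase runs sequentially in one process.
    mapped = (pair for line in lines for pair in map_phase(line))
    grouped = shuffle_phase(mapped)
    return dict(reduce_phase(k, v) for k, v in sorted(grouped.items()))


if __name__ == "__main__":
    docs = ["Hadoop stores data", "Hadoop processes data"]
    print(word_count(docs))  # {'data': 2, 'hadoop': 2, 'processes': 1, 'stores': 1}
```

Because each mapper only looks at its own line and each reducer only at its own key, both steps can be spread across as many machines as the cluster has, which is where Hadoop's processing power comes from.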

Why is Hadoop dying?

Hadoop isn't dying; it has plateaued, and its value has diminished. The analytics and database solutions that run on Hadoop do so largely because of the popularity of HDFS, which was designed as a distributed file system. For that reason, you still see data warehouses used for analytics alongside or on top of HDFS.

Does Google use Hadoop?

Hadoop is increasingly becoming the go-to framework for large-scale, data-intensive deployments. Google, however, built its own systems: with web search, Google needed to be able to quickly access huge amounts of data distributed across a wide array of servers, so it developed Bigtable as a distributed storage system for managing structured data. Hadoop itself was modeled on Google's published MapReduce and Google File System (GFS) papers.

What will replace Hadoop?

5 best Hadoop alternatives:

- Apache Spark: the top Hadoop alternative. Spark is a framework maintained by the Apache Software Foundation and is widely hailed as the de facto replacement for Hadoop's MapReduce processing engine.

- Apache Storm. Apache Storm is another tool that, like Spark, emerged during the real-time processing craze.

- Ceph.

- Hydra.

- Google BigQuery.

Do I need Hadoop?

For data science, the answer is a big yes: Hadoop is a must for data scientists. It allows users to store all forms of data, both structured and unstructured, and its ecosystem provides tools like Pig and Hive for analyzing large-scale data.

Does AWS use Hadoop?

Amazon Web Services uses the open-source Apache Hadoop distributed computing technology, through its Amazon Elastic MapReduce (now Amazon EMR) service, to make it easier to access large amounts of computing power for data-intensive tasks. Hadoop, an open-source implementation of Google's MapReduce model, is already used by companies such as Yahoo and Facebook.

Does Hadoop have a future?

In 2018, the global big data and business analytics market stood at US$169 billion, and by 2022 it is predicted to grow to US$274 billion. Moreover, a PwC report predicts that by 2020 there will be around 2.7 million job postings in data science and analytics in the US alone.

Is Kafka part of Hadoop?

No. Hadoop is an open-source distributed framework used to store and process big data, while Kafka is a separate open-source messaging service. Kafka is often used to stream data into a Hadoop cluster, where the data is stored in HDFS and processed using MapReduce or another processing framework.

Why is Hadoop so popular in the industry?

Its ecosystem is why Hadoop is so popular. Hadoop's core functionality drives its adoption, and many Apache side projects build on its core functions. Because of all those side projects, Hadoop has turned into an ecosystem for storing and processing big data.

What companies use Hadoop?

Here are some of the top Hadoop technology companies expected to contribute to this fast-growing market:

- Amazon Web Services. “Amazon Elastic MapReduce provides a managed, easy-to-use analytics platform built around the powerful Hadoop framework.”

- Cloudera.

- ScienceSoft.

- Pivotal.

- Hortonworks.

- IBM.

- MapR.

- Microsoft.

Is Hadoop a database?

Hadoop is not a type of database, but rather a software ecosystem that allows for massively parallel computing. It is an enabler of certain types of NoSQL distributed databases (such as HBase), which can allow data to be spread across thousands of servers with little reduction in performance.

What are the main features of Hadoop?

Here are a few key features of Hadoop:

- Hadoop brings flexibility in data processing.

- Hadoop is easily scalable.

- Hadoop is fault tolerant.

- Hadoop is great at fast data processing.

- The Hadoop ecosystem is robust.

- Hadoop is very cost-effective.
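Scalability and fault tolerance both come largely from how HDFS stores files: each file is cut into fixed-size blocks, and every block is copied to several machines. The sketch below illustrates that idea using the HDFS defaults of a 128 MB block size (`dfs.blocksize`, Hadoop 2 and later) and a replication factor of 3 (`dfs.replication`). The round-robin placement is a deliberate simplification and an assumption of this sketch; real HDFS uses a rack-aware placement policy, and the function and node names here are hypothetical.

```python
import math
from typing import Dict, List

BLOCK_SIZE_MB = 128  # HDFS default block size (dfs.blocksize) in Hadoop 2 and later
REPLICATION = 3      # HDFS default replication factor (dfs.replication)


def split_into_blocks(file_size_mb: int, block_size_mb: int = BLOCK_SIZE_MB) -> List[int]:
    """Return the size of each block; the last block may be smaller than block_size_mb."""
    n_blocks = math.ceil(file_size_mb / block_size_mb)
    sizes = [block_size_mb] * n_blocks
    if n_blocks and file_size_mb % block_size_mb:
        sizes[-1] = file_size_mb % block_size_mb
    return sizes


def place_replicas(n_blocks: int, nodes: List[str],
                   replication: int = REPLICATION) -> Dict[int, List[str]]:
    """Simplified round-robin placement: each block's replicas land on distinct nodes.
    (Real HDFS uses a rack-aware policy instead.)"""
    if replication > len(nodes):
        raise ValueError("need at least as many nodes as replicas")
    return {
        b: [nodes[(b + r) % len(nodes)] for r in range(replication)]
        for b in range(n_blocks)
    }


def readable_after_failure(placement: Dict[int, List[str]], failed: str) -> bool:
    """Every block stays readable if each one has a replica on some other node."""
    return all(any(n != failed for n in replicas) for replicas in placement.values())


if __name__ == "__main__":
    blocks = split_into_blocks(300)  # a 300 MB file
    print(blocks)                    # [128, 128, 44]
    layout = place_replicas(len(blocks), ["node1", "node2", "node3", "node4"])
    print(layout)
    print(readable_after_failure(layout, "node2"))  # True
```

Adding machines to the node list spreads blocks over more storage (scalability), and because each block has copies elsewhere, losing a single node does not lose any data (fault tolerance).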