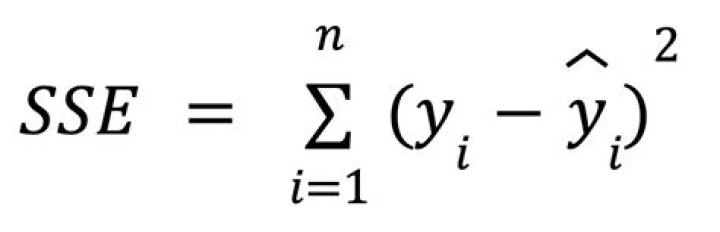

To calculate the sum of squares for error, start by finding the mean of the data set: add all of the values together and divide by the total number of values. Then subtract the mean from each value to find that value's deviation. Next, square each deviation. Finally, add up the squared deviations.

What is SSE in statistics?

In statistics, the residual sum of squares (RSS), also known as the sum of squared residuals (SSR) or the sum of squared estimate of errors (SSE), is the sum of the squares of the residuals (the deviations of the predicted values from the actual empirical values of the data). A small RSS indicates a tight fit of the model to the data.
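The definition above translates directly into code. This is a minimal sketch; the observed values and predictions are made-up numbers used only for illustration, and `sse` is a hypothetical helper name.

```python
def sse(observed, predicted):
    """Residual sum of squares: the sum of (y_i - y_hat_i)^2 over all observations."""
    if len(observed) != len(predicted):
        raise ValueError("observed and predicted must have the same length")
    return sum((y - y_hat) ** 2 for y, y_hat in zip(observed, predicted))


# Illustrative (made-up) observed values and a model's predictions for them.
y = [3.0, 5.0, 7.0, 9.0]
y_hat = [2.5, 5.5, 7.0, 8.0]
print(sse(y, y_hat))  # 0.25 + 0.25 + 0.0 + 1.0 = 1.5
```

A perfect model would give an SSE of 0; the further the predictions drift from the data, the larger the value grows.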

How do you calculate the sum of squares?

- Count the Number of Measurements.

- Calculate the Mean.

- Subtract the Mean From Each Measurement.

- Square the Difference of Each Measurement From the Mean.

- Add the Squares to Get the Sum of Squares. (Dividing This Sum by n - 1 Gives the Sample Variance.)
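The five steps in the list can be sketched in Python as follows. The data set is a made-up example, and the function name `sum_of_squares` is hypothetical; the final division by n - 1 is shown separately because it turns the sum of squares into the sample variance.

```python
def sum_of_squares(measurements):
    """Sum of squared deviations from the mean, following the listed steps."""
    n = len(measurements)                          # 1. count the measurements
    if n == 0:
        raise ValueError("need at least one measurement")
    mean = sum(measurements) / n                   # 2. calculate the mean
    deviations = [x - mean for x in measurements]  # 3. subtract the mean from each
    squares = [d ** 2 for d in deviations]         # 4. square each difference
    return sum(squares)                            # 5. add the squares


data = [2, 4, 4, 4, 5, 5, 7, 9]          # illustrative data, mean = 5
ss = sum_of_squares(data)                # ss == 32.0 for this data
sample_variance = ss / (len(data) - 1)   # optional: divide by n - 1
```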

What information do you need to compute the SSE?

The error sum of squares is obtained by first computing the mean lifetime of each battery type. For each battery of a given type, that type's mean is subtracted from the battery's lifetime and the difference is squared. The sum of these squared terms over all battery types is the SSE. SSE is a measure of sampling error.
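The battery procedure can be sketched as a within-group sum of squares. The lifetimes below are hypothetical numbers chosen so the group means come out whole; the dictionary layout (battery type mapped to a list of lifetimes) is an assumption made for the example.

```python
def within_group_sse(groups):
    """Error (within-group) sum of squares.

    `groups` maps a group label to a list of observations. Each observation is
    compared with the mean of its own group, and the squared differences are
    summed over every group.
    """
    total = 0.0
    for values in groups.values():
        group_mean = sum(values) / len(values)
        total += sum((v - group_mean) ** 2 for v in values)
    return total


# Hypothetical battery lifetimes (hours) for three battery types.
lifetimes = {
    "type_a": [100, 96, 92, 96, 96],   # mean 96
    "type_b": [76, 80, 75, 84, 85],    # mean 80
    "type_c": [108, 100, 96, 98, 98],  # mean 100
}
print(within_group_sse(lifetimes))  # 32 + 82 + 88 = 202.0
```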

What does SSE stand for?

Scottish and Southern Energy

Related Question Answers

What is the meaning of SSE?

Short for Streaming SIMD Extensions, SSE is a processor technology that enables single instruction, multiple data (SIMD) processing. On older processors, only a single data element could be processed per instruction; SSE lets one instruction operate on multiple data elements.

Can R-squared be more than 1?

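An R-squared above 1 represents an abnormal case and has no logical meaning. With the usual definition, $R^2 = 1 - \mathrm{SSE}/\mathrm{SST}$, it cannot happen, because SSE is never negative; a value above 1 therefore points to an error in the calculation, such as a mismatched formula.

What is SSE in math?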

The error sum of squares (SSE) is the sum of the squared differences between each observation and its group's mean. It can be used as a measure of variation within a cluster: if all cases within a cluster are identical, the SSE is 0.

Why do we need standard error?
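For clusters of multi-dimensional points, the same idea uses each cluster's centroid (its coordinate-wise mean) in place of the group mean; this total is the quantity the k-means algorithm tries to minimize. The sketch below assumes cluster labels are already assigned, and the points are made-up data.

```python
def cluster_sse(points, labels):
    """Total within-cluster SSE for points of any dimension.

    Each point is compared with the centroid of its own cluster, and the
    squared Euclidean distances are summed over all clusters.
    """
    clusters = {}
    for point, label in zip(points, labels):
        clusters.setdefault(label, []).append(point)

    total = 0.0
    for members in clusters.values():
        dims = len(members[0])
        centroid = [sum(p[i] for p in members) / len(members) for i in range(dims)]
        total += sum(
            sum((p[i] - centroid[i]) ** 2 for i in range(dims)) for p in members
        )
    return total


# Made-up 2-D points: cluster 0 is spread around (2, 2); cluster 1 is identical points.
points = [(1, 1), (1, 3), (3, 1), (3, 3), (10, 10), (10, 10)]
labels = [0, 0, 0, 0, 1, 1]
print(cluster_sse(points, labels))  # 8.0 (cluster 1 contributes 0)
```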

The standard error of a statistic is the standard deviation of that statistic's sampling distribution. Standard errors are important because they reflect how much a statistic fluctuates from sample to sample. In general, the larger the sample size, the smaller the standard error.

Why do we use the sum of squares?
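For the sample mean, the standard error is commonly estimated as $s/\sqrt{n}$, where $s$ is the sample standard deviation. The sketch below uses that estimate to illustrate the sample-size effect described above; the data are made up, and repeating the sample four times is only a convenient way to keep the spread similar while increasing n.

```python
import math
import statistics


def standard_error_of_mean(sample):
    """Estimated standard error of the sample mean: s / sqrt(n)."""
    if len(sample) < 2:
        raise ValueError("need at least two observations")
    return statistics.stdev(sample) / math.sqrt(len(sample))  # stdev uses n - 1


small = [2, 4, 4, 4, 5, 5, 7, 9]  # illustrative data
large = small * 4                 # similar spread, four times as many observations
print(standard_error_of_mean(small), standard_error_of_mean(large))
```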

Besides simply telling you how much variation there is in a data set, the sum of squares is used to calculate other statistical measures, such as variance, standard error, and standard deviation. These provide important information about how the data is distributed and are used in many statistical tests.

How do you get the variance?

To calculate the variance, start by calculating the mean, or average, of your sample. Then subtract the mean from each data point and square the differences. Next, add up all of the squared differences. Finally, divide the sum by n - 1, where n is the total number of data points in your sample.

What is the value of SSE?
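A short sketch of these steps, cross-checked against Python's standard `statistics` module. The data set is illustrative, and `sample_variance` is a hypothetical helper name; the standard deviation is taken as the square root of the result.

```python
import math
import statistics


def sample_variance(data):
    """Sample variance: sum of squared deviations divided by n - 1."""
    n = len(data)
    if n < 2:
        raise ValueError("sample variance needs at least two data points")
    mean = sum(data) / n                             # mean of the sample
    squared_diffs = [(x - mean) ** 2 for x in data]  # squared deviations
    return sum(squared_diffs) / (n - 1)              # add them up, divide by n - 1


data = [2, 4, 4, 4, 5, 5, 7, 9]  # illustrative data
var = sample_variance(data)
sd = math.sqrt(var)  # standard deviation is the square root of the variance

# Cross-check against the standard library's sample variance and deviation.
assert math.isclose(var, statistics.variance(data))
assert math.isclose(sd, statistics.stdev(data))
```

For a whole population rather than a sample, divide by n instead; `statistics.pvariance` does that.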

R-squared is computed as $R^2 = 1 - \mathrm{SSE}/\mathrm{SST}$, where SSE is the sum of squares due to error and SST is the total sum of squares. R-squared can take on any value between 0 and 1, with a value closer to 1 indicating that a greater proportion of the variance is accounted for by the model.

How do you find the sum of cross products?
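A minimal sketch of $R^2 = 1 - \mathrm{SSE}/\mathrm{SST}$ computed from observed values and a model's predictions (both made up here). Because SSE can never be negative, this quantity can never exceed 1.

```python
def r_squared(observed, predicted):
    """Coefficient of determination: R^2 = 1 - SSE / SST."""
    mean_y = sum(observed) / len(observed)
    sse = sum((y - f) ** 2 for y, f in zip(observed, predicted))  # error sum of squares
    sst = sum((y - mean_y) ** 2 for y in observed)                 # total sum of squares
    if sst == 0:
        raise ValueError("R^2 is undefined when all observed values are equal")
    return 1 - sse / sst


y = [3.0, 5.0, 7.0, 9.0]        # illustrative observations (SST = 20)
y_hat = [2.5, 5.5, 7.0, 8.0]    # illustrative predictions (SSE = 1.5)
print(r_squared(y, y_hat))      # approximately 0.925
```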

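The sum of cross products multiplies the two values in each pair and adds up the products. For example, for the pairs (1, 3), (4, 6), (7, 9), and (1, 1), the sum of cross products is 1 × 3 + 4 × 6 + 7 × 9 + 1 × 1 = 91.

What is Y-hat in regression?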
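The worked example can be reproduced in a few lines. Regression formulas (such as the least-squares slope) typically use the cross products of deviations from the means rather than the raw products, so the sketch shows both; the function names are hypothetical.

```python
def sum_of_cross_products(xs, ys):
    """Raw sum of cross products: sum of x_i * y_i."""
    return sum(x * y for x, y in zip(xs, ys))


def centered_cross_products(xs, ys):
    """Sum of cross products of deviations: sum of (x_i - x_mean) * (y_i - y_mean)."""
    x_mean = sum(xs) / len(xs)
    y_mean = sum(ys) / len(ys)
    return sum((x - x_mean) * (y - y_mean) for x, y in zip(xs, ys))


xs = [1, 4, 7, 1]
ys = [3, 6, 9, 1]
print(sum_of_cross_products(xs, ys))    # 91, matching the worked example
print(centered_cross_products(xs, ys))  # 29.25 (equals 91 - 4 * 3.25 * 4.75)
```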

Y-hat ($\hat{y}$) is the symbol for the predicted value given by the line of best fit in linear regression. The equation takes the form $\hat{y} = bx + a$, where b is the slope and a is the y-intercept. The hat distinguishes the predicted (or fitted) values $\hat{y}$ from the observed data y.

What does total sum of squares mean?
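A sketch of how the fitted values $\hat{y} = bx + a$ can be obtained with ordinary least squares, using the standard slope formula (sum of cross products of deviations divided by the sum of squared x deviations). The data points are made up, and `fit_line` is a hypothetical helper name.

```python
def fit_line(xs, ys):
    """Ordinary least-squares fit of y_hat = b*x + a; returns (b, a)."""
    n = len(xs)
    x_mean = sum(xs) / n
    y_mean = sum(ys) / n
    sp_xy = sum((x - x_mean) * (y - y_mean) for x, y in zip(xs, ys))
    ss_x = sum((x - x_mean) ** 2 for x in xs)
    if ss_x == 0:
        raise ValueError("x values must not all be equal")
    b = sp_xy / ss_x          # slope
    a = y_mean - b * x_mean   # intercept: the line passes through (x_mean, y_mean)
    return b, a


xs = [1, 2, 3, 4]
ys = [2.0, 4.1, 5.9, 8.0]          # illustrative observed data y
b, a = fit_line(xs, ys)            # roughly b = 1.98, a = 0.05
y_hat = [b * x + a for x in xs]    # fitted values, compared with the observed ys
```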

In statistical data analysis, the total sum of squares (TSS or SST) is a quantity that appears as part of a standard way of presenting the results of such analyses. It is defined as the sum, over all observations, of the squared differences between the observations and their overall mean: $\mathrm{SST} = \sum_i (y_i - \bar{y})^2$.

Is the sum of squares the same as the standard deviation?

The sum of squares (SS), or sum of squared deviation scores, is a key measure of the variability of a set of data. It is not the same as the standard deviation: dividing SS by the number of scores (or by n - 1 for a sample) gives the variance, and the square root of the variance is the standard deviation.

What is the sum of the first n squares?

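The sum of the squares of the first n positive integers is $\sum_{k=1}^{n} k^2 = \frac{n(n+1)(2n+1)}{6}$. One derivation starts from the identity $(k-1)^3 = k^3 - 3k^2 + 3k - 1$: summing $k^3 - (k-1)^3 = 3k^2 - 3k + 1$ over $k = 1, \dots, n$ makes the cubes telescope to $n^3$, and the resulting equation can be solved for $\sum k^2$.

What is the regression sum of squares?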
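A quick brute-force check of the closed form $n(n+1)(2n+1)/6$ against direct summation; integer division is exact here because the product is always divisible by 6. The function name is hypothetical.

```python
def sum_of_first_n_squares(n):
    """Closed form for 1^2 + 2^2 + ... + n^2."""
    return n * (n + 1) * (2 * n + 1) // 6  # product is always divisible by 6


# Compare the closed form with adding the squares one by one.
for n in range(50):
    assert sum_of_first_n_squares(n) == sum(k * k for k in range(1, n + 1))

print(sum_of_first_n_squares(10))  # 385
```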

The regression sum of squares, $\sum_i (\hat{y}_i - \bar{y})^2$, describes how well a regression model represents the modeled data: it measures how much of the variation in the data the model explains. A higher regression sum of squares, relative to the total sum of squares, indicates that the model fits the data better; a lower one indicates a poorer fit.

What is sum of squares between groups?
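For a least-squares line with an intercept, the total sum of squares splits exactly into the regression sum of squares plus the SSE, which is why a larger regression share means a better fit. The sketch below checks that decomposition numerically on made-up data; `sums_of_squares` is a hypothetical helper name.

```python
def sums_of_squares(xs, ys):
    """Fit y_hat = b*x + a by least squares; return (SST, regression SS, SSE)."""
    n = len(xs)
    x_mean = sum(xs) / n
    y_mean = sum(ys) / n
    b = (sum((x - x_mean) * (y - y_mean) for x, y in zip(xs, ys))
         / sum((x - x_mean) ** 2 for x in xs))
    a = y_mean - b * x_mean
    y_hat = [b * x + a for x in xs]

    sst = sum((y - y_mean) ** 2 for y in ys)             # total variation
    ss_reg = sum((f - y_mean) ** 2 for f in y_hat)       # explained by the model
    sse = sum((y - f) ** 2 for y, f in zip(ys, y_hat))   # left unexplained
    return sst, ss_reg, sse


# Illustrative data only.
sst, ss_reg, sse = sums_of_squares([1, 2, 3, 4, 5], [1.9, 4.2, 5.8, 8.3, 9.8])
print(sst, ss_reg + sse)  # equal up to floating-point rounding
print(ss_reg / sst)       # share of variation explained (R^2)
```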

Between-group variation is measured by $SS(B) = \sum_j n_j (\bar{x}_j - \bar{x})^2$, called the sum of squares between groups, where $n_j$ and $\bar{x}_j$ are the size and mean of group j and $\bar{x}$ is the grand mean. It measures the variation among the group means. If the group means don't differ greatly from each other and from the grand mean, SS(B) will be small.

Can you factor the sum of squares?
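A sketch of SS(B) on hypothetical group data, together with the within-group sum of squares SS(W), to show that the two add up to the total sum of squares (the usual one-way ANOVA decomposition). All numbers are made up and chosen so the means are whole numbers.

```python
def between_group_ss(groups):
    """SS(B): sum over groups of n_j * (group mean - grand mean)^2."""
    all_values = [v for values in groups.values() for v in values]
    grand_mean = sum(all_values) / len(all_values)
    return sum(
        len(values) * (sum(values) / len(values) - grand_mean) ** 2
        for values in groups.values()
    )


def within_group_ss(groups):
    """SS(W): squared deviations of each value from its own group mean."""
    total = 0.0
    for values in groups.values():
        m = sum(values) / len(values)
        total += sum((v - m) ** 2 for v in values)
    return total


# Hypothetical data: group means 96, 80, 100; grand mean 92.
groups = {
    "a": [100, 96, 92, 96, 96],
    "b": [76, 80, 75, 84, 85],
    "c": [108, 100, 96, 98, 98],
}
ss_b = between_group_ss(groups)   # 1120.0
ss_w = within_group_ss(groups)    # 202.0
all_values = [v for values in groups.values() for v in values]
grand = sum(all_values) / len(all_values)
ss_t = sum((v - grand) ** 2 for v in all_values)  # 1322.0 = ss_b + ss_w
```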

It's true that you can't factor A² + B² over the reals (that is, with real-number coefficients) if A and B are just simple variables. So a sum of two squares of simple variables cannot be factored over the reals.

What is the formula for the sum of an arithmetic sequence?

The formula says that the sum of the first n terms of an arithmetic sequence equals n divided by 2, times the sum of twice the first term a and the product of the common difference d and n - 1: $S_n = \frac{n}{2}\left(2a + (n-1)d\right)$. Here n is the number of terms being added together.
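A small check of $S_n = \frac{n}{2}(2a + (n-1)d)$ against adding the terms directly. Using `fractions.Fraction` keeps the result exact even when a or d are fractions; the example values are arbitrary, and `arithmetic_sum` is a hypothetical helper name.

```python
from fractions import Fraction


def arithmetic_sum(a, d, n):
    """Sum of the first n terms a, a + d, ..., a + (n - 1)d, via n/2 * (2a + (n - 1)d)."""
    return Fraction(n, 2) * (2 * a + (n - 1) * d)


# Compare the formula with adding the terms one by one.
a, d, n = 3, 4, 10
terms = [a + k * d for k in range(n)]   # 3, 7, 11, ..., 39
assert arithmetic_sum(a, d, n) == sum(terms)
print(arithmetic_sum(a, d, n))  # 210
```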