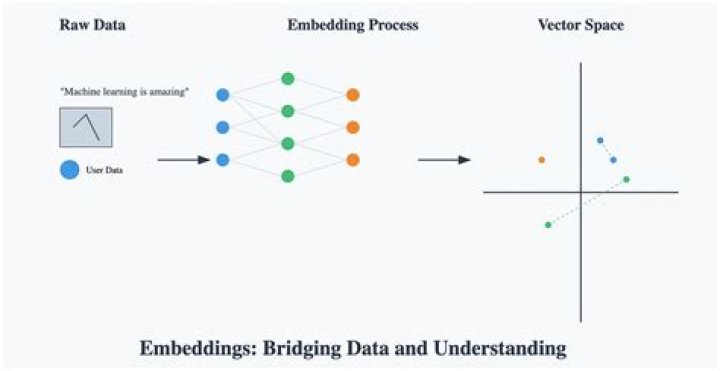

Embeddings. An embedding is a relatively low-dimensional space into which you can translate high-dimensional vectors. Embeddings make it easier to do machine learning on large inputs such as sparse vectors representing words. An embedding can be learned and reused across models.
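To make the idea of translating a sparse vector into a low-dimensional one concrete, here is a minimal sketch in plain Python. The vocabulary size, the embedding width, and the numbers in `embedding_table` are made-up assumptions for illustration, not values from any trained model. It shows that multiplying a one-hot vector by the table gives the same result as reading out one row.

```python
# Toy sizes, chosen only for illustration (assumptions, not from a real model).
VOCAB_SIZE = 5
EMBED_DIM = 2

# Hypothetical embedding table: one dense row of EMBED_DIM values per vocabulary index.
embedding_table = [
    [0.1, 0.3],
    [0.7, -0.2],
    [-0.5, 0.9],
    [0.0, 0.4],
    [0.6, 0.6],
]


def one_hot(index, size):
    """Return a sparse one-hot vector of the given size."""
    return [1.0 if i == index else 0.0 for i in range(size)]


def project(sparse_vector, table):
    """Vector-matrix product of a sparse row vector with the table."""
    dim = len(table[0])
    return [
        sum(sparse_vector[i] * table[i][d] for i in range(len(table)))
        for d in range(dim)
    ]


def lookup(index, table):
    """Read the dense vector for an index directly from the table."""
    return list(table[index])


sparse = one_hot(2, VOCAB_SIZE)                        # 5 numbers, mostly zeros
dense_via_matmul = project(sparse, embedding_table)    # 2 numbers
dense_via_lookup = lookup(2, embedding_table)          # same 2 numbers, no multiplication
```

In practice the lookup form is the one usually used, since it avoids building the sparse vector at all.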

Keeping this in view, what is word embedding in deep learning?

A word embedding is a learned representation for text where words that have the same meaning have a similar representation. Each word is mapped to one vector and the vector values are learned in a way that resembles a neural network, and hence the technique is often lumped into the field of deep learning.
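One common way to check whether two words have "a similar representation" is cosine similarity between their vectors. The sketch below uses hand-picked three-dimensional vectors in a hypothetical `toy_vectors` table; they are assumptions for illustration and were not learned from any corpus.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


# Hand-picked toy vectors (assumption): "cat" and "dog" point in a similar
# direction, while "car" points elsewhere.
toy_vectors = {
    "cat": [0.9, 0.8, 0.1],
    "dog": [0.8, 0.9, 0.2],
    "car": [0.1, 0.2, 0.9],
}

sim_cat_dog = cosine_similarity(toy_vectors["cat"], toy_vectors["dog"])
sim_cat_car = cosine_similarity(toy_vectors["cat"], toy_vectors["car"])

print(f"cat vs dog: {sim_cat_dog:.2f}")
print(f"cat vs car: {sim_cat_car:.2f}")
```

With these toy values the cat/dog score comes out higher than the cat/car score, which is the behaviour a learned embedding is meant to show for words of related meaning.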

One may also ask, what is an embedding space?

Embedding space is the space in which the data is embedded after dimensionality reduction. Its dimensionality is typically lower than that of the ambient space.

Similarly one may ask, what is the meaning of word embedding?

Word embedding is the collective name for a set of language modeling and feature learning techniques in natural language processing (NLP) where words or phrases from the vocabulary are mapped to vectors of real numbers.
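The mapping from vocabulary words to vectors of real numbers can be sketched as two small steps: give every distinct word an integer id, then attach a vector to each word. The function names `build_vocabulary` and `init_vectors`, the six-word corpus, and the dimension of 4 are all assumptions for illustration. The vectors here are only randomly initialised; a real technique would then adjust them during training.

```python
import random


def build_vocabulary(tokens):
    """Assign each distinct token an integer id in order of first appearance."""
    vocab = {}
    for token in tokens:
        if token not in vocab:
            vocab[token] = len(vocab)
    return vocab


def init_vectors(vocab, dim, seed=0):
    """Give every word a vector of `dim` real numbers (random starting values)."""
    rng = random.Random(seed)
    return {word: [rng.uniform(-0.5, 0.5) for _ in range(dim)] for word in vocab}


corpus = "the cat sat on the mat".split()
vocab = build_vocabulary(corpus)        # "the" appears twice but gets one id
vectors = init_vectors(vocab, dim=4)    # one 4-dimensional vector per unique word
```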

What is the purpose of embedding?

Embedding is the process in which tissues or specimens are enclosed in a mass of embedding medium using a mould. Because the tissue blocks are very thin, they need a supporting medium in which to be embedded.

Related Question Answers

How is embedding done?

Embedding is done by looking at text data through the lens of neural nets: the data is represented as lower-dimensional vectors, and these vectors are called embeddings. The technique is used to reduce the dimensionality of text data, but these models can also learn some interesting traits about the words in a vocabulary.

What are embedding layers?
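As a rough picture of what an embedding layer does with integer-encoded text, here is a minimal sketch in plain Python. `ToyEmbedding` is a hypothetical class written for this page, not the layer from any library; `input_dim` follows the vocabulary-size argument named in the answer to this question, and `output_dim` (the length of each vector) is an assumed name for this sketch.

```python
import random


class ToyEmbedding:
    """Hypothetical lookup-table layer: integer ids in, dense vectors out."""

    def __init__(self, input_dim, output_dim, seed=0):
        rng = random.Random(seed)
        self.input_dim = input_dim      # size of the vocabulary
        self.output_dim = output_dim    # length of each word vector (assumed name)
        # One row of small random weights per vocabulary id.
        self.weights = [
            [rng.uniform(-0.05, 0.05) for _ in range(output_dim)]
            for _ in range(input_dim)
        ]

    def __call__(self, token_ids):
        """Map a sequence of integer ids to their rows in the weight table."""
        for token_id in token_ids:
            if not 0 <= token_id < self.input_dim:
                raise IndexError(f"id {token_id} is outside the vocabulary")
        return [list(self.weights[token_id]) for token_id in token_ids]


# Ids between 0 and 10 inclusive need a vocabulary size of 11.
layer = ToyEmbedding(input_dim=11, output_dim=3)
encoded = [0, 4, 10, 4]
output = layer(encoded)   # 4 vectors of length 3; both 4s map to the same vector
```

The sketch leaves out training: in a real network the rows of the weight table would be updated along with the rest of the model.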

The Embedding layer is defined as the first hidden layer of a network. It must specify 3 arguments, the first of which is input_dim: the size of the vocabulary in the text data. For example, if your data is integer encoded to values between 0 and 10, then the size of the vocabulary would be 11 words.

What is embedding model?

An embedding is a relatively low-dimensional space into which you can translate high-dimensional vectors. Ideally, an embedding captures some of the semantics of the input by placing semantically similar inputs close together in the embedding space. An embedding can be learned and reused across models.

Is Word2Vec deep learning?

Word2vec is a two-layer neural net that processes text. Its input is a text corpus and its output is a set of vectors: feature vectors for words in that corpus. While Word2vec is not a deep neural network, it turns text into a numerical form that deep nets can understand.

Why is it called Skip gram?
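As a concrete illustration of the pairing this question is about, the sketch below builds (context-word, target-word) pairs from a window of `k` words on each side of the target. The function name `skipgram_pairs` and the example sentence are assumptions for illustration; this is one reasonable ordering, not the code of any particular word2vec implementation.

```python
def skipgram_pairs(tokens, k):
    """Return (context_word, target_word) pairs using k words on each side."""
    pairs = []
    for i, target in enumerate(tokens):
        start = max(0, i - k)
        end = min(len(tokens), i + k + 1)
        for j in range(start, end):
            if j != i:  # a word is not its own context
                pairs.append((tokens[j], target))
    return pairs


sentence = ["the", "quick", "brown", "fox", "jumps"]
pairs = skipgram_pairs(sentence, k=2)

# A target with a full window on both sides gets 2*k pairs.
brown_pairs = [pair for pair in pairs if pair[1] == "brown"]
```

Words near the edges of the sentence have fewer neighbours, so they contribute fewer than 2*k pairs.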

Any code that iterates over 2*k target words, or 2*k context words, to create a total of 2*k (context-word)->(target-word) pairs for training, is "skip-gram". Each ordering is reasonably called "skip-gram" and winds up with similar results at the end of bulk training.

Why is word2vec used?

The purpose and usefulness of Word2vec is to group the vectors of similar words together in vector space. That is, it detects similarities mathematically. Word2vec creates vectors that are distributed numerical representations of word features, such as the context of individual words.

What is word2vec model?

Word2vec is a group of related models that are used to produce word embeddings. Word2vec takes as its input a large corpus of text and produces a vector space, typically of several hundred dimensions, with each unique word in the corpus being assigned a corresponding vector in the space.

What is embedding a link?

A little tougher to define, an embedded link is just another way of saying a link that, when clicked, leads somewhere else. Embedded links can be more than text, though. You can embed an image as a link to another page on the web, so you can create either an embedded text link or an embedded image link.

What is embedding in biology?

Biological embedding is the process by which experience gets under the skin and alters human biology and development. Systematic differences in experience in different social environments lead to different biological and developmental outcomes.

What is embedding learning?

Embedded learning most simply describes learning while doing. Research indicates that embedded learning is more powerful than traditional approaches to learning because the learner is more motivated and engaged in completing a job or task, and also has a deeper understanding of context.

What does Embedded * mean?

To surround tightly or firmly; envelop or enclose: Thick cotton padding embedded the precious vase in its box. To incorporate or contain as an essential part or characteristic: A love of color is embedded in all of her paintings.

What is embedded structure?

An embedded structure is most commonly referred to as a nested structure, so I'll use the term nested structure in this answer. A nested structure in C is nothing but a structure within a structure. One structure can be declared inside another structure in the same way that structure members are declared inside a structure.

What is the importance of embedding in sectioning?

Embedding is important in preserving tissue morphology and giving the tissue support during sectioning. Some epitopes may not survive harsh fixation or embedding. The tissue is typically cut into thin sections (5-10 µm) or smaller pieces (for whole mount studies) to facilitate further study.